Researchers at MIT have designed a wearable device that converts facial movements into electrical signals and helps people with ALS (amyotrophic lateral sclerosis) communicate.

In 2016, Dr. Canan Dagdeviren, an assistant professor at the Massachusetts Institute of Technology (MIT), met Stephen Hawking. The world-famous physicist had amyotrophic lateral sclerosis (ALS), a progressive degenerative condition. As a result, Hawking was unable to speak and relied on a computer interface that used movements of his cheek to move a cursor across letters on a screen to form sentences.

Dr. Dagdeviren noticed, however, how slow the interface was and how bulky the equipment was. This inspired her to address these challenges and create an interface that would let patients with ALS communicate more easily.

Amyotrophic lateral sclerosis (ALS), also known as Lou Gehrig’s disease

The degenerative disease affects the motor neurons, resulting in loss of muscle control. Symptoms generally begin as weakness on one side of the body, slurred speech, inability to grasp objects, or difficulty walking. Eventually, the person is unable to eat, move, speak, or breathe.

According to the ALS Association, over 5,000 people per year are diagnosed with the disease in the United States. Average life expectancy after diagnosis is usually two to five years. Although there is currently no cure for the fatal condition, researchers have made significant progress in designing assistive devices for patients.

A Skin-Like Sensor

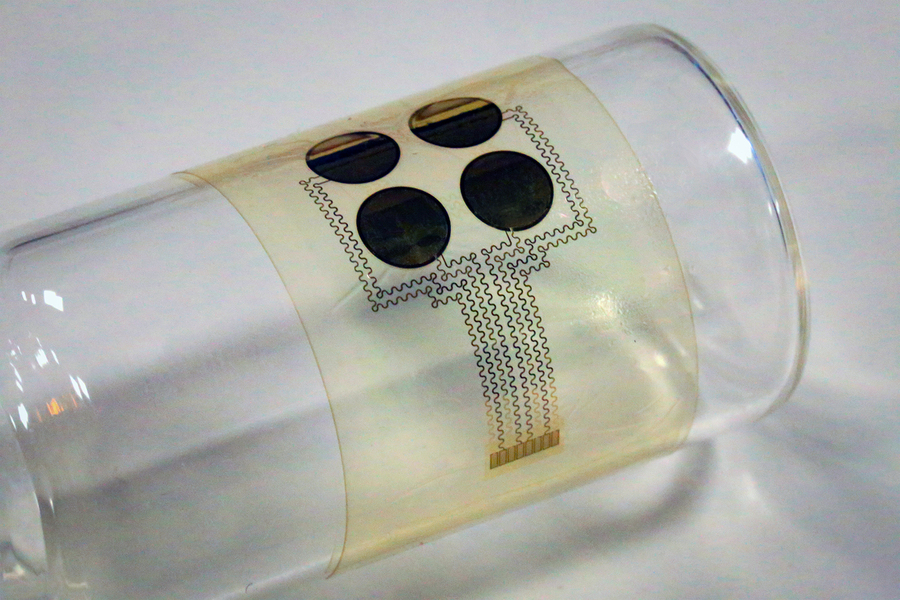

The team, led by Dr. Dagdeviren, designed a stretchable, skin-like, wearable device that converts minute facial movements into electrical signals. The device consists of four piezoelectric sensors embedded within a thin silicone film.

Not only are our devices malleable, soft, disposable, and light, they’re also visually invisible.

Dr. Canan Dagdeviren, leader of the research team

Another advantage of the device is its low cost. The researchers state that the components are easy to mass-produce, so the cost of each device could be as low as $10.

Using measurements of skin deformations, the researchers created an algorithm to distinguish between a smile, open mouth, and pursed lips.

According to the study published in the journal Nature Biomedical Engineering, the device was tested on two ALS patients. It distinguished between these movements with an accuracy of 75 percent among the patients. Among healthy participants, the accuracy was 87 percent.
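To make the idea of telling facial movements apart from sensor readings more concrete, here is a minimal sketch. It is not the researchers' algorithm: it assumes a simple nearest-centroid classifier, and the four-channel readings, their values, and the names `TRAINING`, `train`, and `classify` are all made up for illustration.

```python
import math

# Hypothetical training data: each sample holds one reading per sensor
# (four channels, arbitrary units). All values are invented for illustration.
TRAINING = {
    "smile": [[0.9, 0.8, 0.1, 0.2], [1.0, 0.7, 0.2, 0.1]],
    "open_mouth": [[0.1, 0.2, 0.9, 1.0], [0.2, 0.1, 1.0, 0.8]],
    "pursed_lips": [[0.5, 0.1, 0.1, 0.6], [0.6, 0.2, 0.2, 0.5]],
}


def centroid(samples):
    """Return the per-channel mean of a list of equal-length samples."""
    n = len(samples)
    return [sum(channel) / n for channel in zip(*samples)]


def train(training):
    """Compute one centroid per movement label."""
    return {label: centroid(samples) for label, samples in training.items()}


def classify(centroids, reading):
    """Return the label whose centroid is closest (Euclidean) to the reading."""
    return min(centroids, key=lambda label: math.dist(centroids[label], reading))


CENTROIDS = train(TRAINING)
print(classify(CENTROIDS, [0.95, 0.75, 0.15, 0.15]))
```

A real system would work on time-varying signals rather than single snapshots, which is one reason accuracy on actual patients is well below 100 percent.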

We can create customizable messages based on the movements that you can do. You can technically create thousands of messages that right now no other technology is available to do. For instance, certain facial movements could be used to transmit specific messages like “I love you” or “I’m hungry.”

Dr. Canan Dagdeviren, leader of the research team
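One way a handful of movements could yield thousands of messages is by combining them into short sequences. The sketch below rests on that assumption, which the article does not spell out; the phrasebook entries and the names `PHRASEBOOK`, `decode`, and `count_sequences` are hypothetical.

```python
# The three movements the article says the device can distinguish.
MOVEMENTS = ("smile", "open_mouth", "pursed_lips")

# Hypothetical phrasebook: which sequence maps to which message is invented here.
PHRASEBOOK = {
    ("smile", "smile"): "I love you",
    ("open_mouth", "pursed_lips"): "I'm hungry",
}


def decode(sequence):
    """Look up the message for a sequence of movements, or None if unassigned."""
    return PHRASEBOOK.get(tuple(sequence))


def count_sequences(n_movements, max_length):
    """Count distinct sequences of length 1 to max_length, repeats allowed."""
    return sum(n_movements ** k for k in range(1, max_length + 1))


print(decode(["smile", "smile"]))          # I love you
print(count_sequences(len(MOVEMENTS), 7))  # 3279
```

Under this assumption, sequences of up to seven movements already give more than three thousand distinct messages.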

In conclusion, the authors of the study believe the device will not only help patients communicate but also help track the progression of the disease. They plan to test the device on additional patients.

Reference:

Sun, T., Tasnim, F., McIntosh, R.T. et al. Decoding of facial strains via conformable piezoelectric interfaces. Nat Biomed Eng 4, 954–972 (2020). https://doi.org/10.1038/s41551-020-00612-w